On February 13, 2018, Dr. Rubin presented at the Information Without Borders Conference. The working title of her talk was:

The Disinformation Triangle: The Epidemiology of “Fake News”.

Draft Abstract:

“In 2018, the spread of misinformation and intentionally deceptive information on digital news and social media platforms reached unprecedented volumes. In addition to unintentional inaccuracies and errors, there are various shades of falsehood, including clickbait-style omissions in news headlines, unverified rumors, misleading satirical news, advertorials, and wholly and intentionally fabricated news. Such mis-/disinformation in our news streams can disrupt politics, business, culture (Jack, 2017), and democracy (Owen, 2017). Researchers warn of potential threats to citizens’ very ability to make evidence-based decisions on matters of societal importance, such as public health, climate change, and national security (van der Linden et al., 2017).

The networked nature of online media enables mis- and disinformation to spread rapidly, much like a viral contagion (Tambuscio et al., 2015). I propose a comprehensive set of measures to fight mis-/disinformation as a sociological, technology-enabled disease of epidemic proportions. This comparison reframes what has been indiscriminately called “the fake news problem” as an epidemic that requires accurate diagnostics, containment, inoculation, and prevention campaigns, leading, if not to the eventual eradication of the malaise, then at least to a reduction of harm.

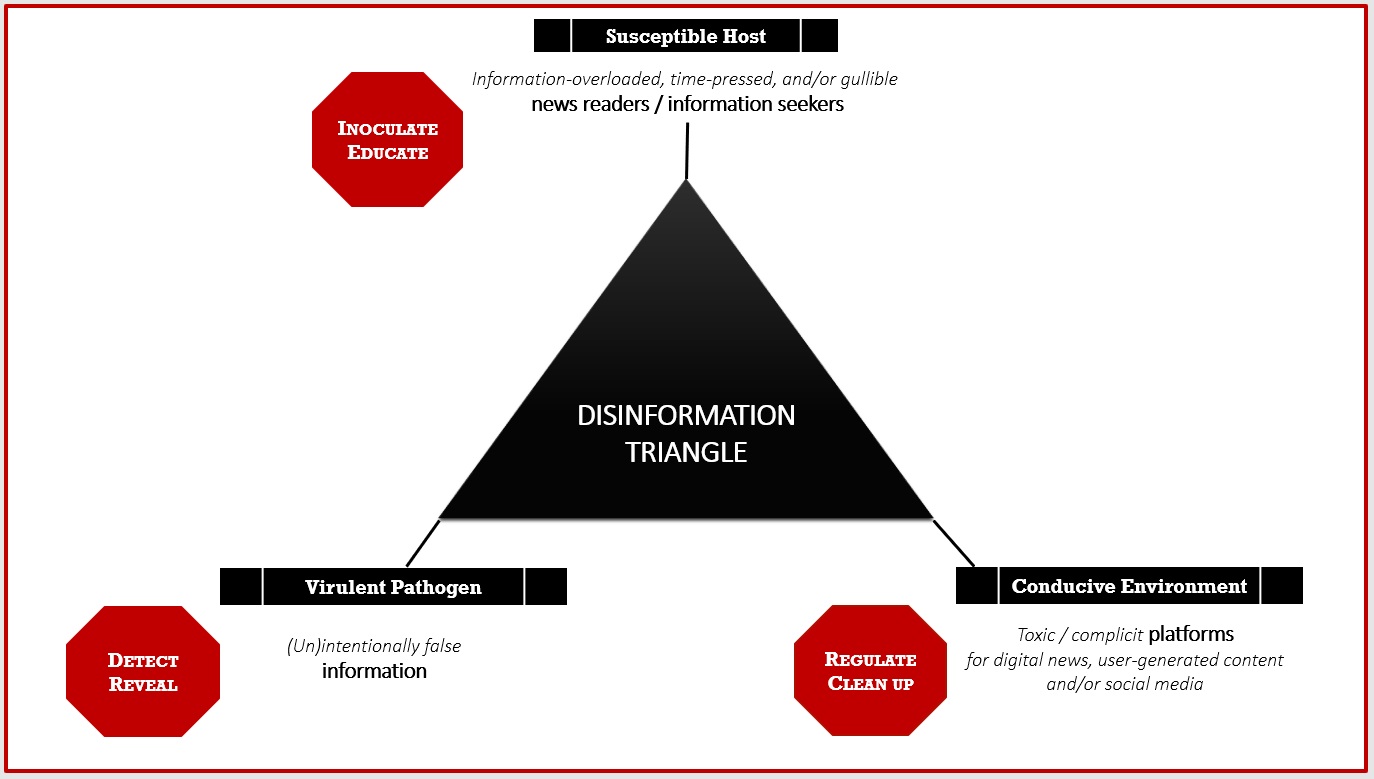

A widely used epidemiological model known as “The Disease Triangle” (e.g., Scholthof, 2007) describes disease as the interaction of three causal factors: a susceptible host, a virulent pathogen, and a conducive environment. If my analogy of mis-/disinformation as a disease holds, the roots of “fake news” translate directly into an explanatory model, “The Disinformation Triangle”. Information-overloaded, time-pressed, or gullible news readers and information seekers are susceptible to pathogenic mis-/disinformation delivered within unregulated, monopolistic content platforms (Figure 1).

FIGURE 1. “The Disinformation Triangle” articulates causal factors and dictates a holistic tri-part solution addressing individual elements of the mis-/disinformation epidemic in digital environments.

Studies on “cognitive inoculations” or “mental vaccines” (van der Linden, 2017), as well as concerted educational campaigns such as “How to Spot Fake News” (ALA, 2016) and CNN’s “Your Own Opinion, Not Facts” campaign (CNN, 2017), are, in essence, public awareness efforts that provide further training in critical thinking and information literacy. The role of information professionals, librarians, journalists, and the broader education system for learners of all ages is clearly visible in this fight to “inoculate” the public. We need to understand the psychology of biases, belief systems, and attitudinal change. We need to encourage information seekers to read beyond the headlines and to grasp the intricacies of information practices when confronted with mis-/disinformation.

While technological solutions for detecting “the fake news pathogens” (for example, deception detectors, clickbait detectors, satire detectors, and rumor busters) may be in their infancy, such tools will likely play a more significant role in the near future (akin to spell-checkers). Alerting news readers to potentially deceptive information and prompting them to fact-check ultimately supports human decision-making in new encounters with unverified information.

Social media platforms are conducive to deceptive practices by content creators because the business model underlying their operations is based on ad revenue; they reward virality and user engagement (clicks, shares, views, impressions, etc.) above information quality and truthfulness. Key technology platforms (Google, Facebook, Amazon, and Apple, cumulatively worth $2.17 trillion) require regulatory measures to curb their monopolistic tendencies (Taylor, 2017).

In this talk, I introduce the audience to our News Verification Browser (Rubin, 2017), currently in development at the Language and Information Technology Lab (LiT.RL). The Browser identifies “disinformation pathogens”, namely, individual varieties of falsehood such as falsifications, satirical fakes, and clickbait. It is based on predictive modeling techniques stemming from our work in Automated Deception Detection, a cutting-edge technology that emerged around 2004–2006 in the LIS-adjacent fields of Natural Language Processing and Machine Learning. Deception detection methods typically identify measurable linguistic (content-based) features of deceptive texts, such as complexity, uncertainty, non-immediacy, diversity, affect, specificity, expressiveness, and informality. Drawing on research in Interpersonal Psychology, Communication Studies, and Law Enforcement, I will provide a brief primer on what is currently known about people’s overall ability to spot lies and on how such predictive cues could help algorithms tell liars and truth-tellers apart.
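To make the idea of “measurable linguistic features” concrete, here is a minimal sketch that computes shallow proxies for a few of the cue families named above (complexity, uncertainty, diversity, non-immediacy, informality) from raw text. It is not the News Verification Browser’s implementation. The hedge and self-reference word lists, the regular-expression tokenizer, and the names `extract_cues` and `CueProfile` are all hypothetical, chosen only for illustration.

```python
import re
from dataclasses import dataclass

# Toy cue lexicons: hypothetical placeholders, NOT the lexicons used by
# the News Verification Browser or any published deception-detection study.
HEDGES = {"may", "might", "could", "perhaps", "possibly", "reportedly",
          "allegedly", "apparently", "seems", "likely"}
SELF_REFERENCES = {"i", "me", "my", "mine", "we", "us", "our", "ours"}

WORD_RE = re.compile(r"[a-z']+")           # naive lowercase word tokenizer
SENTENCE_RE = re.compile(r"[^.!?]+[.!?]*")  # naive sentence splitter


@dataclass
class CueProfile:
    """A few shallow, content-based cues computed from one text."""
    avg_word_length: float      # rough proxy for complexity
    avg_sentence_length: float  # words per sentence, another complexity proxy
    uncertainty: float          # share of tokens that are hedge words
    diversity: float            # type-token ratio (lexical diversity)
    self_reference: float       # low values are sometimes read as non-immediacy
    exclamation_rate: float     # exclamation marks per sentence (informality)


def extract_cues(text: str) -> CueProfile:
    tokens = WORD_RE.findall(text.lower())
    sentences = [s for s in SENTENCE_RE.findall(text) if s.strip()]
    n_tokens = max(len(tokens), 1)        # avoid division by zero on empty input
    n_sentences = max(len(sentences), 1)
    return CueProfile(
        avg_word_length=sum(len(t) for t in tokens) / n_tokens,
        avg_sentence_length=len(tokens) / n_sentences,
        uncertainty=sum(t in HEDGES for t in tokens) / n_tokens,
        diversity=len(set(tokens)) / n_tokens,
        self_reference=sum(t in SELF_REFERENCES for t in tokens) / n_tokens,
        exclamation_rate=text.count("!") / n_sentences,
    )


if __name__ == "__main__":
    profile = extract_cues(
        "Sources say the senator may have allegedly hidden funds! Shocking!")
    print(profile.uncertainty, profile.diversity)  # prints: 0.2 1.0
```

Under this sketch’s assumptions, such values would be computed over a labeled corpus of deceptive and truthful texts and passed to a trained classifier, rather than read off one text at a time. Cues such as affect, specificity, and expressiveness generally need richer resources (for example, sentiment lexicons or part-of-speech taggers), which the sketch omits.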

I emphasize that these methods and the resulting algorithmic tools, such as the News Verification Browser, are meant to augment human discernment rather than replace it, by highlighting potentially false information that may require scrutiny. The critical thinking of news readers and social media users remains the key information literacy skill for navigating increasingly toxic online environments. The explanatory power of the Disinformation Triangle as a conceptual model lies in detailing a triad of partial solutions that, taken together, may help halt the epidemic of “fake news”.

Victoria L. Rubin is an Associate Professor at the Faculty of Information and Media Studies and the Director of the Language and Information Technologies Research Lab (LiT.RL) at the University of Western Ontario. She specializes in information retrieval and natural language processing techniques that enable analyses of texts to identify, extract, and organize structured knowledge. She studies complex human information behaviors that are, at least partly, expressed through language, such as deception, uncertainty, credibility, and emotions. Her research on deception detection has been published in recent core workshops on the topic, at prominent information science conferences, and in the Journal of the Association for Information Science and Technology. Her 2015–2018 project, Digital Deception Detection: Identifying Deliberate Misinformation in Online News, is funded by an Insight Grant from the Government of Canada’s Social Sciences and Humanities Research Council (SSHRC). For further information, see https://victoriarubin.fims.uwo.ca/.”